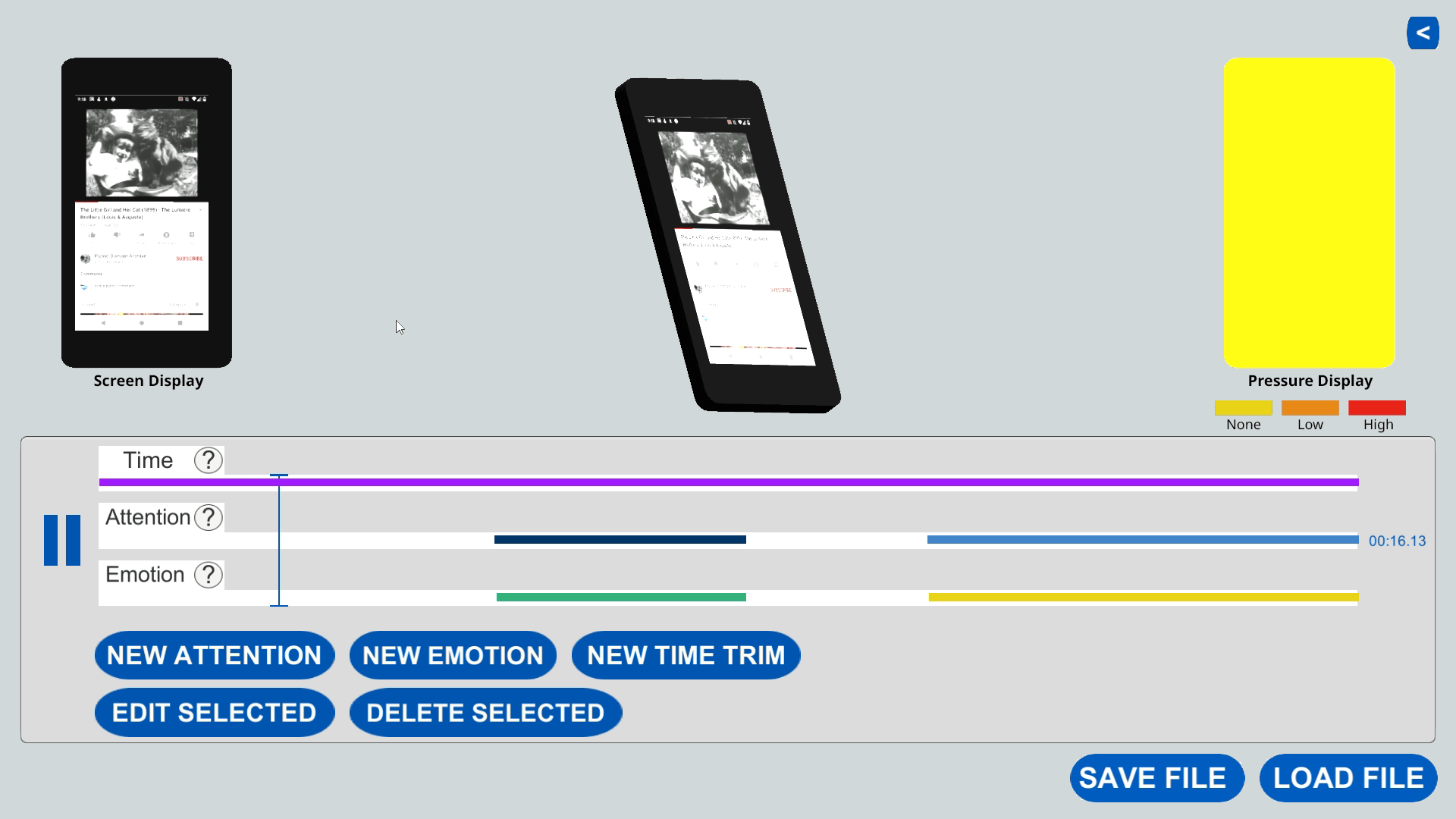

Remotely mirror the phone interactions of your users

Remotion lets remote users share richer context, which is especially useful during usability studies. With it, you can replay the behavior of people visiting your website or application alongside their screen capture. Careful observation can tell you more about participants' intent, posture and grip, attention, emotional state, and habits. This website explains how to set up Remotion and hosts add-ons that extend its functionality.

How does it work?

Analyze and annotate motion behavior

A remote user's hand motions can reveal contextual information about their surroundings. Imagine a user who visits a recipe website, scrolls to the ingredient list, and then props the phone up at an angle in portrait mode. That may signal that they are gathering the ingredients and plan to follow the recipe. On the other hand, the same scrolling followed by setting the phone down sideways and face-down may indicate a lack of interest in the recipe.

None of this context is visible in the screen capture, yet the way a user handles their device can reveal more about their situation than what is displayed on the screen.
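Remotion's own analysis pipeline is not described here, so the following is only a hypothetical sketch of how the two poses in the recipe example might be told apart from accelerometer data in a browser. It assumes readings shaped like `DeviceMotionEvent.accelerationIncludingGravity` (m/s², where a phone lying flat with the screen up reads roughly `{x: 0, y: 0, z: 9.81}`). The names `classifyPose` and `dominantPose` and the 0.8 threshold are illustrative assumptions, not part of Remotion, and axis sign conventions may differ between browsers, so real data should be checked first.

```typescript
// Hypothetical sketch, not Remotion's API: classify how a phone is resting
// from accelerometer samples that include gravity (m/s^2). Assumed axes:
// face-up flat ≈ {0, 0, +9.81}, upright portrait ≈ {0, +9.81, 0}.

type Vec3 = { x: number; y: number; z: number };

type Pose = "face-up" | "face-down" | "portrait-tilted" | "landscape-tilted" | "unknown";

// Assumed threshold on the normalized z component: 0.8 treats anything
// within roughly 37 degrees of horizontal as lying flat.
const FLAT_THRESHOLD = 0.8;

function classifyPose(g: Vec3): Pose {
  const magnitude = Math.sqrt(g.x * g.x + g.y * g.y + g.z * g.z);
  if (magnitude < 1e-6) return "unknown"; // no usable gravity signal

  // Share of gravity along the screen normal, in [-1, 1].
  const nz = g.z / magnitude;
  if (nz > FLAT_THRESHOLD) return "face-up";
  if (nz < -FLAT_THRESHOLD) return "face-down";

  // Otherwise the phone is tilted: gravity mostly along y means portrait,
  // mostly along x means landscape.
  return Math.abs(g.y) >= Math.abs(g.x) ? "portrait-tilted" : "landscape-tilted";
}

// Majority vote over a window of samples to smooth out hand jitter.
// Returns "unknown" for an empty window.
function dominantPose(samples: Vec3[]): Pose {
  const counts: Record<Pose, number> = {
    "face-up": 0,
    "face-down": 0,
    "portrait-tilted": 0,
    "landscape-tilted": 0,
    unknown: 0,
  };
  for (const s of samples) counts[classifyPose(s)] += 1;

  let best: Pose = "unknown";
  for (const pose of Object.keys(counts) as Pose[]) {
    if (counts[pose] > counts[best]) best = pose;
  }
  return best;
}
```

In a page, samples could be collected from `devicemotion` events registered with `window.addEventListener` and passed to `dominantPose` over a short window after scrolling stops. A propped-up phone would then tend toward `"portrait-tilted"`, while one set down on its screen would tend toward `"face-down"`. Voting over a window rather than trusting a single sample is a deliberate choice, since individual readings are noisy while the phone is being moved.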